Social Media Monitoring for Democracy (Part I): Getting Comfortable with the Concepts

Social media monitoring is an essential tactic for many pro-democracy initiatives. In order to find out what is being said on social media and by whom, find coordinated information campaigns, and identify and respond to online threats in a timely manner, democratic actors need tools and methods to get data from social media platforms and analyze it.

However, before embarking on social media monitoring, it is essential to consider the local context. Different social media platforms are dominant in different communities and regions, and it is important to understand the local information environment. This can be done using tools such as the MIT Media Cloud, a news aggregation tool, to explore trends beyond social media. It is also important to research the local languages, relevant laws, norms, and commitments, as well as civil society resources that can support your work, such as the Design 4 Democracy Coalition. Tools and regulations for access will be different depending on the platform, and analytics for encrypted messaging apps are extremely limited.

Data Collection

The first step in data collection is determining which platforms, websites, and news organizations you wish to monitor, as well as which themes, narratives, hashtags, and keywords are relevant.

There are three types of data collection approaches:

- Application Program Interface (API): Working directly with a platform’s API allows researchers to process a large amount of data quickly. Each platform runs their API differently, but most allow researchers to collect real time data, called streaming, which means that after a search is entered new posts, which fit the search restrictions, will be collected. Some platforms also allow researchers to collect historical data, searching through data that was on the platform before the request was made. APIs limit how far back a historical search can go (though Pushshift.io is a good resource if this is problematic for you; it uses an API to archive data from major platforms), the type of data researchers can access (for example the IP address of a post is unlikely to be available because of the privacy concerns this would cause), the volume of data, and the rate of download. Additionally, posts and profiles that have been removed for violating codes of conduct are not available. Furthermore, while some APIs are publicly available, others require authentication before access is granted.

- Third party collection tool: Tools like Twitonomy and Meltwater interact with the platform API and present the information in an easy-to-read manner. They are also built to easily export data in other formats, such as CSV. However, a number of these tools are very expensive (Sysomos, Brandwatch), and Meta, which does not allow access to Facebook’s API, is increasing barriers to access to CrowdTangle, Facebook’s third party API access tool, with no timeline for when onboarding will reopen or a new tool will be introduced. Twitter has also recently announced that it will no longer support free access to its API for researchers.

- Web scraping: Web scraping extracts the source code from a target website and mines data, but it is often illegal and/or violates platforms’ Terms of Service.

Data Analysis

Before analyzing the data, it can be helpful to prune, or remove superfluous data. Gephi and Graphika are two tools that use proprietary software to build data pruning into their data analysis workflows.

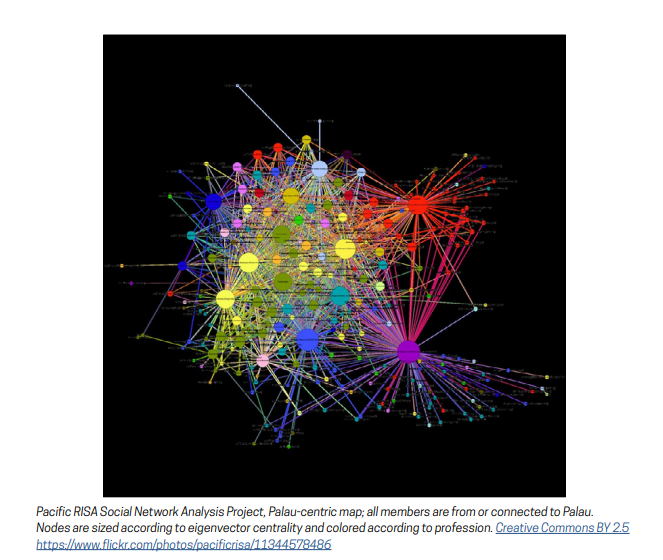

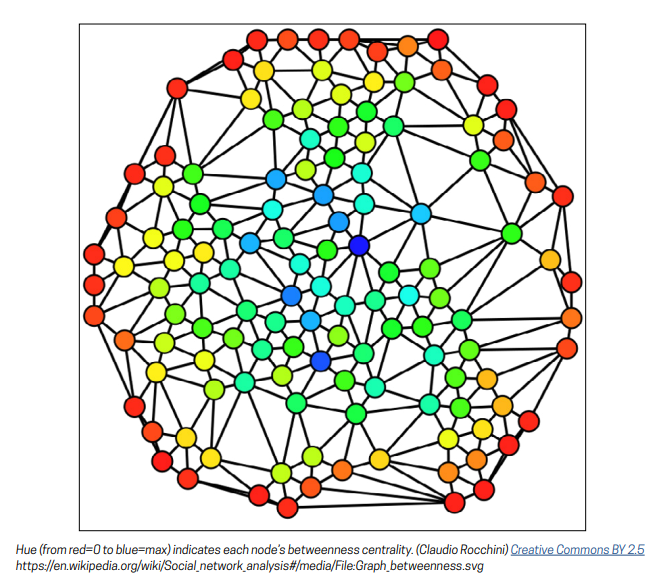

A number of methodologies can be used to analyze data. Social network analysis uses a map of connections between profiles to determine influence. Influence within social networks can be conceptualized as content-based, which examines hashtags, websites, and information narratives to determine narratives and then examines related interactions to determine effectiveness/spread, or actor-based. Actor-based influence analysis methods use connections between profiles, groups, posts, and websites to unearth coordinated networks. There are three types of centrality (how influential a profile, website, or post is); degree centrality (the number of connections it has), betweenness centrality (reflects the capacity to spread messages quickly), and eigenvector centrality (reflects who influences the influencers). Tools like Gephi can calculate these measures of centrality.

Other methods of analysis include linguistic analysis, keywords and lexicons (some of which can be found online, for example PeaceTech Lab and Hatebase.org offer several multilingual lexicons), narrative analysis, qualitative coding, and quantitative linguistic analysis (natural language processing). Term frequency-inverse document frequency (tf-idf) can then compare the frequency of these words and phrases in different documents. Hate speech is particularly challenging to collect and analyze data on in part because lexicons need to develop so quickly to keep up with language trends, but Google Jigsaw’s Perspectives API uses machine learning to automatically calculate toxicity scores. While this tool is open source, it is currently only available for English language content.

Data analytics for social media monitoring can provide your organization with clean, meaningful data. You can identify malign narratives, determine where interventions will have the most impact, and find trends to better understand the social media landscape. Social media monitoring is a powerful tool for democracy.

This blog is the first in a two-blog series. Please keep an eye out for part two, which will delve into more detail about what monitoring is possible with different social media platforms and monitoring tools. For a more detailed guide to the world of social media monitoring, including data collection and analytics, check out NDI’s Guide on Data Analytics for Social Media Monitoring.