User Experience Testing

As anyone who has spent time in tech knows, “designing good software” is as complicated as the term is simple. It’s easy to come up with ideas and improvements for your products, but the actual implementation is full of pitfalls that stand between you and a high-quality product. Buggy code will make your product unusable; feature creep can make it overly complex; and poor user experience (UX) design can make it impossible to navigate.

These headaches can seem insurmountable when even the most carefully tested code is met with numerous bug reports upon release, or the most well-thought-out design receives a stream of complaints. But the key to solving these issues lies in changing your processes to align more closely with a human-centered design model. The underlying idea behind this philosophy is that since software is created for the benefit of its users, your metrics of success should be intrinsically tied to how users experience your software. As the designer, you will never be able to fully anticipate what your users want or how they will interact with your software. Instead of guessing, you need to rely on input from the users themselves to determine how best to develop your product.

This is the basic idea behind user experience testing: if you want to understand how your software is actually being used and what improvements users actually want and need, watch them use it. Some information can be collected by sending out surveys or regularly gathering feedback, and site analytics also play a valuable role in capturing users’ behavior. But certain information can only be collected by watching users work with a product while interacting with them directly and asking questions as they go. Our recent goal at NDItech has been to go through each of our products and perform these tests in order to better understand our users’ needs and what improvements we can make, starting with Apollo, our elections tool.

To conduct a user experience test, the first step is to put together a list of tasks that someone would typically perform using your software. When you bring in a user for testing, you explain the process to them and ask some introductory questions to identify what kind of user they are. In our case, our users fell into two categories: data clerks, who are in charge of entering observation data into the Apollo database during elections, and data administrators, who set up and oversee the database. After the introductory phase, you simply give the user the tasks one at a time and ask them to explain out loud what they are doing as they work through each one.

It is this interaction that makes user experience testing powerful. When the user hesitates on a screen, you can ask them what they are thinking, what they are looking for, or what they expect to see. By doing this, you find out which options and features the user expects at certain points, and you can adjust your software accordingly. You are also present to watch the finer points of their behavior. Where do they instinctively move their mouse when they land on a page? Are they able to find the right link immediately, or do they pause or have to hover over several menus first? Ideally, you want several people present at a user experience test so that each one can focus on a particular task, such as interacting with the user, recording the user’s movements, or noting general observations. With enough attention to detail, you may pick up on user instincts that even the users themselves aren’t aware of.

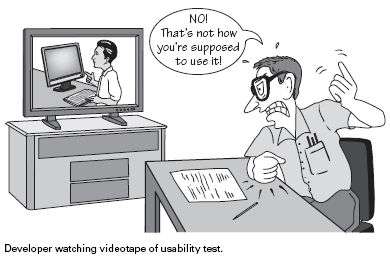

In our case, the user experience testing was incredibly enlightening. When you use a product often enough, you naturally know where certain features are located, and it was valuable to realize how many were unnecessarily hidden away and hard to find for someone with less experience. There were even some issues that I had run into myself when learning the software but had failed to note. By seeing how our users navigated pages, we could tell which buttons and features should be made more prominent and which ones were simply cluttering the screen. We also found cases where button labels confused users and caused them to use features incorrectly. Hours after the user experience testing, our product manager Noble was already full of ideas for improvements and fixes for the next version of Apollo.

User experience testing isn’t going to give you all the answers for software design, but it’s a powerful tool nonetheless. You can scheme all you want about what the most important features are and how best to lay out your product, but at some point you need a reality check with the user. What you thought would be an excellent feature might not fit into a user’s workflow, while what seemed to be an easy flow for completing a task could be mystifying to the user. To understand what users are experiencing and thinking as they use your product, you need to be there talking to them as they use it. Done right, the result is a guide for building a more intuitive and user-friendly experience. We’re all ready to look into redesigning Apollo, with one team member remarking that we learned more in those 60 minutes than we did in 6 months.